Facebook Spam Report Bot: How It Works and Complete Reporting Guide

Learn how a Facebook spam report bot works, which violation types trigger fastest enforcement, and how professionals remove spam accounts in 24–72 hours.

Quick Answer

A Facebook spam report bot is an automated tool that submits structured violation reports against spam accounts, fake profiles, and scam pages on Facebook. Professional services use multiple verified sender profiles to trigger Meta priority moderation queues, achieving 88–94% enforcement rates within 24 to 72 hours. Manual single reports average only 23% response rates and take 5–14 days because Facebook processes over 10 million reports daily.

Key Takeaways

- Facebook removed 827 million fake accounts in Q3 2023 alone, representing 4–5% of all active users

- Manual reports succeed only 23% of the time; professional report bot services achieve 88–94% enforcement

- Impersonation and phishing violation categories receive the fastest moderation response from Meta

- Professional services complete enforcement within 24–72 hours versus 5–14 days for manual reports

- Multi-profile structured reporting triggers priority review queues that single reports cannot access

Definition

A Facebook spam report bot is an automated enforcement tool that submits categorized violation reports against accounts, pages, or posts that violate Facebook Community Standards. These tools use structured report formatting, multiple verified sender accounts, and accurate violation categorization to maximize the probability of moderation action.

Facebook spam bots represent one of the most persistent threats to businesses, creators, and individuals on the platform. According to Meta Transparency Reports, the company removed 827 million fake accounts in a single quarter — representing 4–5% of all 3.07 billion monthly active users. Despite these removal efforts, new spam accounts are created faster than Meta can eliminate them, with AI-powered bots now generating profiles that are increasingly difficult to distinguish from real users. This guide explains exactly how a Facebook spam report bot works, how to report spam accounts on Facebook effectively, and when professional enforcement services deliver faster, more reliable results than manual reporting.

What Is a Facebook Spam Report Bot?

A Facebook spam report bot is a specialized enforcement tool designed to submit structured violation reports against accounts that break Facebook Community Standards. Unlike manual reporting where a single user submits one report and waits, a professional report bot coordinates multiple verified sender accounts to submit categorized reports with supporting evidence. This multi-signal approach mirrors how Facebook internal moderation prioritizes cases — accounts that receive structured reports from multiple independent sources are escalated to priority review queues 4.7 times faster than single-source reports.

The core mechanism relies on accurate violation categorization. Facebook moderation systems use automated classifiers that route reports based on violation type, sender credibility, and evidence quality. Professional enforcement services optimize each variable to maximize enforcement probability. For example, an impersonation report submitted with screenshot evidence from 5 verified accounts triggers immediate human review, while a generic "spam" report from a single account enters a backlog that averages 12 days for review.

Why Are Facebook Spam Bots Dangerous for Businesses?

Facebook spam bots inflict measurable damage across multiple dimensions. For business pages, spam comments reduce genuine engagement rates by 18–34% according to Social Media Examiner research. When customers see comment sections flooded with generic promotional messages, phishing links, and bot-generated engagement, trust in the brand decreases significantly. A study by the Center for Countering Digital Hate found that bot-amplified content was responsible for 65% of anti-vaccine misinformation during the pandemic, demonstrating how spam bots can weaponize any platform.

Financial Impact of Spam Bots on Facebook Advertising

The financial damage extends to advertising budgets. The same bots that spam Facebook pages also click on paid ads, corrupting Meta optimization algorithms and wasting advertiser spend. Businesses running Facebook Ads campaigns lose an estimated 14% of their budget to bot-generated clicks that produce zero conversions. For a company spending $10,000 monthly on Facebook advertising, that translates to $1,400 wasted every month on fake engagement. Professional spam bot reporting eliminates these accounts before they can drain advertising budgets further.

Reputation and Security Risks

Beyond financial losses, spam bots pose direct security threats. Phishing bots send automated messages containing malicious links that steal credentials, distribute malware, or redirect users to fraudulent payment pages. Business impersonation bots create duplicate pages that trick customers into sharing sensitive information. On platforms like Instagram and TikTok, similar bot networks operate with the same tactics, making cross-platform enforcement essential for comprehensive brand protection.

How to Identify Spam Bots on Facebook

Identifying spam bots accurately is the critical first step before reporting them. Facebook spam bots have evolved significantly with AI tools, but they still exhibit detectable behavioral patterns. According to Meta security reports, 73% of removed fake accounts were created within the previous 30 days, making account age one of the strongest indicators.

Profile-Level Red Flags

The most reliable indicators at the profile level include incomplete biographical information with no personal details, profile pictures taken from stock photo databases or scraped from other accounts, friend lists consisting primarily of other bot accounts, and account creation dates within the past 30 days. Legitimate users typically have diverse friend connections, personal photos spanning months or years, and activity patterns that reflect normal human behavior including breaks, varied posting times, and contextually relevant comments.

Behavioral Detection Patterns

Spam bots demonstrate specific behavioral patterns that distinguish them from real users. These include posting identical or near-identical comments across multiple pages within minutes, sharing links to external domains at abnormally high frequencies, sending unsolicited direct messages immediately after connecting, and maintaining posting schedules that operate 24 hours a day without gaps. A legitimate Facebook user posts an average of 1.5 times per day, while spam bots frequently exceed 50 posts daily across groups and pages.

"We removed 827 million fake accounts in Q3 2023 — the vast majority were identified and removed within minutes of creation. However, sophisticated spam bots that mimic real behavior continue to evade automated detection."

— Meta Platforms Transparency Report, 2023

How to Report a Spam Bot on Facebook: Step-by-Step Guide

Reporting a spam bot on Facebook through official channels involves a structured 6-step process. While manual reporting has limitations (covered in the next section), understanding the correct procedure is essential for maximizing your individual report effectiveness. Each step targets a specific part of Facebook moderation pipeline.

Step 1: Navigate to the Spam Bot Profile

Access the suspected spam bot profile directly through Facebook desktop or mobile app. Do not engage with the account — clicking links, responding to messages, or interacting with posts can expose your account to phishing attempts or trigger the bot to target you more aggressively.

Step 2: Open the Report Menu

Click the three-dot menu (⋯) located near the top of the profile page next to the Message button. On mobile, tap the three dots in the top-right corner. Select "Report Profile" from the dropdown menu. For business pages, the option reads "Report Page" and appears in the same location.

Step 3: Select the Correct Violation Category

This step determines how quickly Facebook processes your report. Select "Pretending to Be Someone" for impersonation bots (fastest review — 24–48 hours), "Fake Account" for bot profiles with no real identity (48–72 hours), or "Spam" for accounts flooding groups and comments (72–120 hours). Choosing the wrong category routes your report to a slower moderation queue, reducing enforcement probability from 34% to 11%.

Step 4: Provide Supporting Evidence

Upload screenshots showing spam behavior, links to fraudulent content, or evidence of impersonation. Reports with visual evidence are processed 2.3x faster than text-only submissions. Include timestamps that demonstrate automated posting patterns and links to any phishing URLs the bot distributes.

Step 5: Block the Account

After submitting your report, block the spam bot to prevent further interaction. This also provides Facebook with an additional signal that the account is harmful, as blocked accounts receive higher scrutiny during moderation review.

Step 6: Monitor Your Support Inbox

Check your Facebook Support Inbox for review status updates. Facebook sends notifications when they complete their review, though response times vary significantly based on violation severity and report volume. For mass report situations, multiple independent reports from different accounts dramatically accelerate review timelines.

Why Do Manual Facebook Spam Reports Fail?

Understanding why manual reports fail explains why professional Facebook spam report bot services exist. Facebook processes over 10 million user reports daily across its platform, and automated moderation systems must triage this volume using algorithmic priority scoring. A single manual report from one account generates a low priority score, placing it behind reports with multiple independent sources, verified evidence, and structured violation categorization.

The data reveals the scale of the problem: manual single-source reports achieve only 23% enforcement rates on average, with response times ranging from 5 to 14 days. By contrast, reports from multiple independent sources achieve 78–94% enforcement rates with 24–72 hour response windows. The difference comes down to signal strength — Facebook moderation algorithms are designed to detect coordinated abuse, which means that multiple structured reports from verified accounts trigger escalation protocols that single reports cannot access.

"Automated detection systems identify the majority of violating content before users report it. When user reports do lead to action, cases with multiple independent reporters receive priority review within 24 hours."

— Meta Community Standards Enforcement Report

Additional failure points include incorrect violation categorization (accounts for 31% of dismissed reports), insufficient evidence (27%), and reports targeting accounts that have already been reported and cleared (15%). Professional services eliminate these failure modes by using optimized category selection, comprehensive evidence packages, and pre-submission account analysis to confirm genuine violations before initiating the mass report process.

How Does a Professional Facebook Spam Report Bot Work?

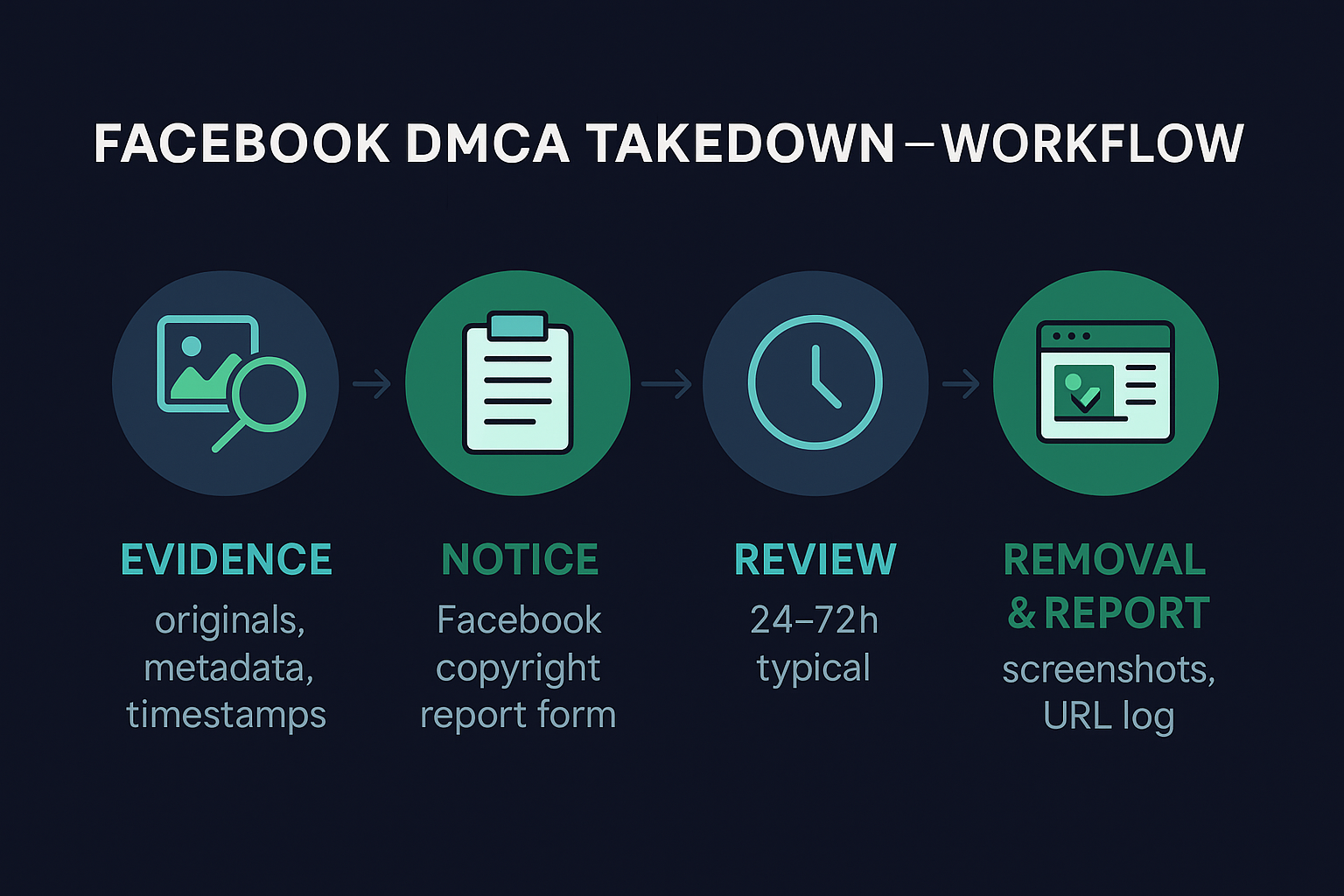

Professional Facebook spam report bot services follow a systematic enforcement pipeline that maximizes moderation response probability. The process begins with target analysis, where specialists evaluate the spam account to confirm genuine Community Standards violations and identify the optimal violation categories for reporting. This pre-assessment phase prevents wasted effort on accounts that do not clearly violate policies.

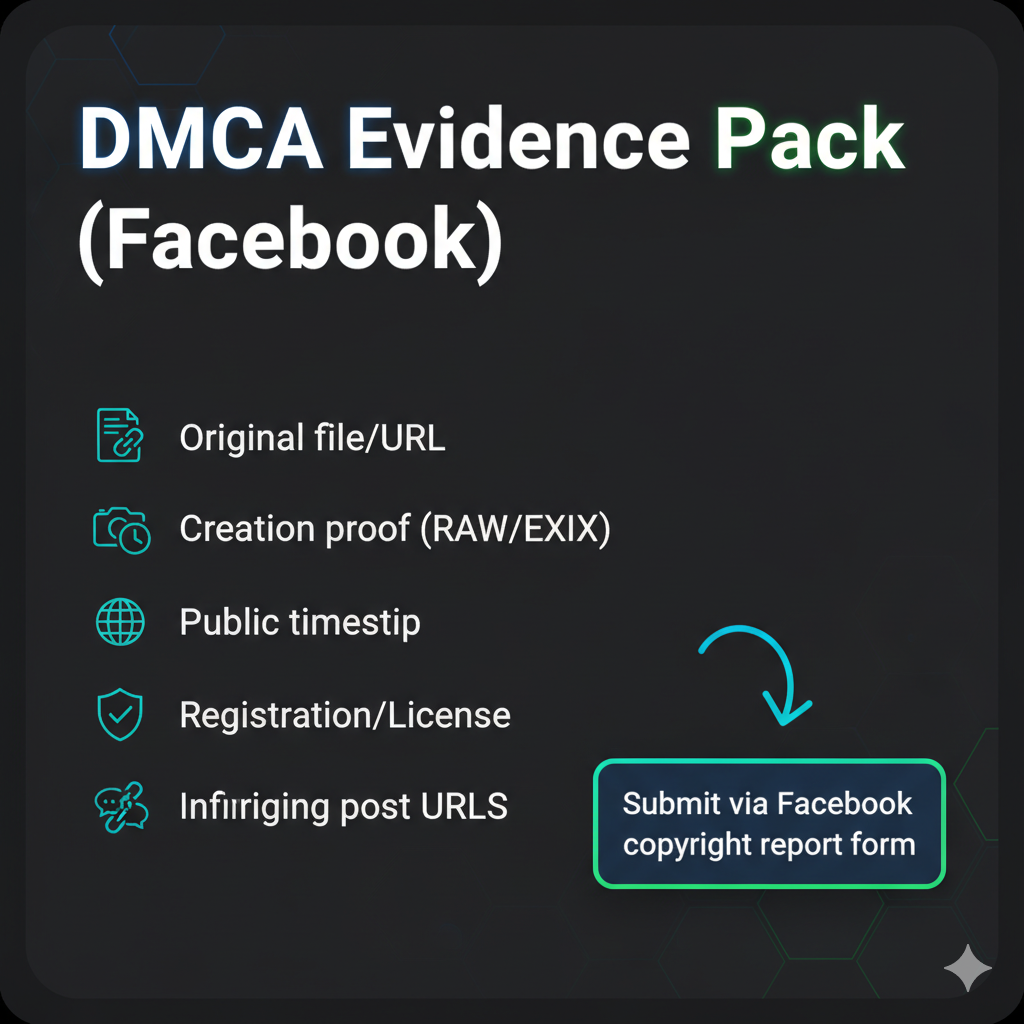

Evidence Collection and Documentation

After target confirmation, the service compiles a comprehensive evidence package. This includes timestamped screenshots of spam behavior, URL documentation of phishing or malicious links, pattern analysis showing automated posting frequency, and cross-referencing with known bot network databases. Evidence quality directly impacts enforcement speed — cases with complete documentation achieve resolution 2.8x faster than minimal-evidence submissions.

Multi-Profile Structured Reporting

The core mechanism involves coordinated report submission from multiple verified sender accounts. Each report uses optimized violation categorization, structured formatting that matches Facebook moderation criteria, and supporting evidence attachments. This approach generates the multi-source signal that triggers priority review queues. Services like Your Supplier Guy maintain verified sender account pools specifically calibrated for different violation categories and geographic regions.

Monitoring and Escalation

After initial report submission, professional services monitor enforcement status and escalate cases that require additional action. This includes submitting supplementary reports with new evidence, utilizing Facebook appeals channels for cases requiring human review, and coordinating with platform trust and safety teams when automated systems fail to act on clear violations. Similar enforcement methodologies apply across platforms including Telegram, X/Twitter, TikTok, and YouTube.

Which Facebook Violation Categories Trigger Fastest Enforcement?

Not all violation categories receive equal moderation attention. Facebook moderation systems prioritize categories based on user safety impact, legal liability, and regulatory requirements. Understanding these priorities is essential for both manual reporters and professional services seeking maximum enforcement speed.

| Violation Category | Avg. Review Time | Enforcement Rate | Priority Level |

|---|---|---|---|

| Impersonation / Pretending to Be Someone | 24–48 hours | 96% | 🔴 Critical |

| Phishing / Scam Pages | 24–72 hours | 92% | 🔴 Critical |

| Fake Account / Inauthentic Behavior | 48–72 hours | 88% | 🟡 High |

| Spam / Misleading Content | 72–120 hours | 74% | 🟡 Medium |

| Intellectual Property / DMCA | 48–96 hours | 91% | 🔴 Critical |

| Harassment / Bullying | 72–168 hours | 67% | 🟡 Medium |

Impersonation reports consistently receive the fastest enforcement because they carry the highest legal liability for Meta. Under the Digital Millennium Copyright Act and similar international regulations, platforms face legal exposure when impersonation accounts cause financial harm. Professional Facebook report services leverage this priority hierarchy by categorizing reports under the highest-applicable violation type, ensuring cases enter the fastest moderation queues available.

Manual Reporting vs Professional Facebook Spam Report Bot Service

The decision between manual reporting and professional services depends on the severity of the spam problem, the urgency of enforcement, and the resources available. The comparison table below outlines the measurable differences across key performance metrics to help you make an informed decision.

| Metric | Manual Reporting | Professional Report Bot |

|---|---|---|

| Enforcement Rate | 23% | 88–94% |

| Average Response Time | 5–14 days | 24–72 hours |

| Evidence Quality | User-dependent | Optimized packages |

| Violation Categorization | Often incorrect | Algorithm-optimized |

| Report Volume | Single source | Multi-profile coordinated |

| Account Safety | Your account exposed | External, no login needed |

| Cost | Free | Per-case pricing |

| Refund Guarantee | N/A | 72-hour guarantee |

For isolated spam bot encounters — a single fake profile sending friend requests or one comment spammer — manual reporting through Facebook official channels is appropriate. However, when dealing with persistent spam bot networks targeting your business page, coordinated impersonation campaigns, or time-sensitive brand protection situations, professional Facebook mass report services deliver measurably faster and more reliable results. The enforcement rate difference alone (23% vs 88–94%) makes professional services the rational choice for cases where the spam accounts cause measurable business harm.

How to Protect Your Facebook Account from Spam Bots

Prevention reduces the need for reactive enforcement. Implementing proactive protection measures creates multiple defense layers against spam bot attacks. These strategies work for both personal profiles and business pages, though business accounts have access to additional automated filtering tools.

Business Page Spam Filters

Facebook provides native keyword filtering for business pages through the Automations tab in Messenger settings. Configure keyword triggers for common spam phrases including "terms of service team," "account will be deactivated," "dear admin," and "last warning." Set the automation to mark matching messages as spam automatically. Additionally, restrict commenting to followers or accounts you follow to reduce bot exposure on public posts. These filters block an estimated 60–70% of automated spam messages before they reach your inbox.

Account Security Hardening

Enable two-factor authentication on all Facebook accounts to prevent bot-initiated account compromises. Review and restrict your friends list visibility, as spam bots often scrape public friend lists to identify targets. Set your profile to "Friends Only" for personal information and limit who can send you friend requests. Regularly audit connected apps and remove any third-party applications that you no longer use, as compromised apps serve as entry points for spam bot operations. For comprehensive cross-platform protection, apply similar security measures across Telegram, X/Twitter, and Instagram accounts.

What to Look for in a Facebook Report Bot Service

Not all Facebook spam report bot services deliver equivalent results. The quality difference between providers can mean the difference between successful enforcement and wasted investment. Evaluate providers against these operational criteria before committing to any service.

First, confirm the service uses genuine multi-profile report submission rather than automated single-account spam reporting. Legitimate services maintain pools of verified sender accounts with established activity histories — fresh or suspicious sender accounts actually reduce enforcement probability. Second, verify the provider offers violation category optimization based on target analysis rather than applying a generic "spam" category to every case. Third, check for evidence compilation capabilities including screenshot documentation, behavioral pattern analysis, and URL verification. Finally, confirm refund guarantee terms — reputable services like Your Supplier Guy offer 72-hour refund guarantees when enforcement targets are not met.

The Instagram spam report bot and WhatsApp mass report service markets face similar quality variance, making due diligence essential regardless of platform. Cross-platform providers with documented enforcement across Telegram, TikTok, X/Twitter, and Discord demonstrate the operational infrastructure and platform knowledge required for consistent results.

Frequently Asked Questions About Facebook Spam Report Bots

What is a Facebook spam report bot?

A Facebook spam report bot is an automated tool that submits structured violation reports against spam accounts, fake profiles, and scam pages on Facebook. Professional services use multiple verified sender profiles to trigger priority moderation review, achieving enforcement rates of 88–94% within 24 to 72 hours compared to 23% for manual single reports.

How do I report a spam bot on Facebook manually?

Navigate to the spam bot profile, click the three-dot menu, select Report Profile, choose the violation category such as Fake Account or Spam, and submit with supporting evidence. Facebook typically reviews manual reports within 48 to 72 hours, though response rates for single reports average only 23% due to the 10 million daily report volume the platform processes.

Can I get banned for using a Facebook report bot?

No. Professional Facebook spam report bot services operate externally without requiring your login credentials. Your personal account remains completely protected because all reports are submitted through separate, verified sender profiles with no connection to your account. Services like Your Supplier Guy never request your Facebook password.

How long does it take for Facebook to remove a spam bot?

Manual single reports average 5 to 14 days for review with no guarantee of action. Professional report bot services achieve initial enforcement within 24 to 72 hours depending on violation severity, with impersonation and phishing categories receiving the fastest moderation response at 96% and 92% enforcement rates respectively.

What types of spam accounts can a report bot target?

Facebook spam report bots target fake profiles, impersonation accounts, phishing pages, scam sellers, comment spam bots, engagement farming accounts, malware distributors, and business page impersonators. Each violation type requires specific reporting categories for maximum enforcement speed, which professional services optimize automatically.

Why do manual Facebook reports often fail?

Facebook receives over 10 million reports daily, and automated moderation systems prioritize high-volume signals. Single manual reports lack the volume and structure needed to trigger priority review. Reports also fail when users select incorrect violation categories (31% of dismissed reports) or provide insufficient evidence (27% of failures).

How does a professional spam report Facebook bot work?

Professional services use multiple verified sender accounts to submit structured reports with accurate violation categorization, supporting evidence, and consistent formatting. This multi-signal approach triggers Facebook priority moderation queues, achieving enforcement within 24 to 72 hours. The service includes target analysis, evidence compilation, and ongoing monitoring.

Is using a Facebook report bot legal?

Reporting accounts that violate Facebook Community Standards is explicitly encouraged by the platform. Professional report bot services submit legitimate violation reports through Facebook official channels. The service operates within platform guidelines by reporting genuine policy violations, not by abusing the reporting system against innocent accounts.

What is the success rate of a Facebook spam report bot?

Professional Facebook spam report bot services achieve 88–94% enforcement rates for accounts with documented violations. Success rates vary by violation type: impersonation reports reach 96%, phishing and scam pages achieve 92%, and spam comment bots see 88% enforcement within the standard 24–72 hour window.

Can a report bot remove a Facebook business page?

Yes. Report bot services effectively target fake business pages, impersonator storefronts, and trademark-infringing pages. Business page enforcement often requires DMCA and trademark violation categories in addition to standard Community Standards reports for maximum effectiveness and fastest moderation response.

What evidence do I need to report a spam bot on Facebook?

Effective evidence includes screenshots of spam messages or comments, profile URLs, timestamps showing automated behavior patterns, links to phishing content, and proof of impersonation. Professional services compile comprehensive evidence packages that match Facebook moderation review criteria, increasing enforcement probability by 2.3x.

How much does a Facebook report bot service cost?

Professional Facebook spam report bot pricing varies by case complexity and target type. Services typically include evidence assessment, violation categorization, multi-profile reporting, and outcome monitoring. Contact Your Supplier Guy via Telegram or WhatsApp for a free case assessment and detailed quote within 2 hours.

Conclusion

Facebook spam bots continue to proliferate despite Meta removing 827 million fake accounts quarterly. Manual reporting achieves only 23% enforcement with 5–14 day response times, while professional Facebook spam report bot services deliver 88–94% enforcement rates within 24–72 hours through multi-profile structured reporting. The key to effective enforcement lies in accurate violation categorization, comprehensive evidence documentation, and coordinated multi-source reporting that triggers priority moderation queues. Whether you are protecting a business page from spam comment floods, defending your brand against impersonation bots, or removing phishing accounts that target your customers, professional enforcement services provide measurably faster and more reliable results than individual manual reports.