Discord Mass Report: Complete Guide to Report Bots, Tools & Professional Enforcement

How Discord mass report tools work, how to mass report a Discord server or account, and how to avoid mass report scams. 92% professional success rate.

Quick Answer

A Discord mass report is the coordinated submission of multiple violation reports against a Discord account, server, or channel to trigger review by Discord's Trust & Safety team. Discord officially states that report volume alone does not determine enforcement action: evidence quality and documented ToS violations drive moderation decisions. Professional Discord mass report services achieve a 92% enforcement success rate by combining documented evidence, strategic report categorization, and Trust & Safety escalation, with typical resolution in 24–72 hours.

Key Takeaways

- ✅ Discord evaluates report quality and evidence, not volume — a single documented report can trigger action

- ✅ High-harm reports (child safety, extremism) are reviewed within 24–48 hours; standard reports within one week

- ✅ Discord mass report bots violate ToS and risk banning the reporter — professional services use compliant methods

- ✅ The "accidental report" scam is a widespread social engineering attack — Discord never contacts users via DMs about reports

- ✅ Professional enforcement achieves 92% success rate with evidence-based reporting across 15+ platforms

What Is Discord Mass Reporting?

Discord mass reporting is the practice of submitting multiple coordinated reports to Discord's Trust & Safety team against a specific account, server, or message that violates Discord's Community Guidelines or Terms of Service. The purpose is to escalate enforcement review for violations including harassment, spam, impersonation, illegal content, and fraud.

Discord surpassed 200 million monthly active users in 2024, establishing itself as the dominant real-time communication platform for gaming communities, content creators, and professional teams. With that scale, content moderation challenges have intensified significantly. Discord's Trust & Safety team processes thousands of reports daily, and the platform's own reporting guidelines acknowledge that review times range from 24 hours to several weeks depending on severity. A Discord mass report strategy addresses the enforcement gap between isolated individual reports, which often receive delayed attention, and the coordinated, evidence-backed approach that triggers meaningful Trust & Safety review. This guide covers how to mass report a Discord account, the mechanics of Discord mass report bots, scam identification, and how Your Supplier Guy delivers professional enforcement with a documented 92% success rate across 2,000+ client cases.

What Is Discord Mass Report and How Does It Work?

A Discord mass report is the strategic coordination of multiple violation reports submitted to Discord's Trust & Safety team against a specific user, server, or piece of content. Unlike a single report from one account, mass reporting involves multiple independent reporters documenting the same violation, which signals systemic abuse to Discord's moderation infrastructure.

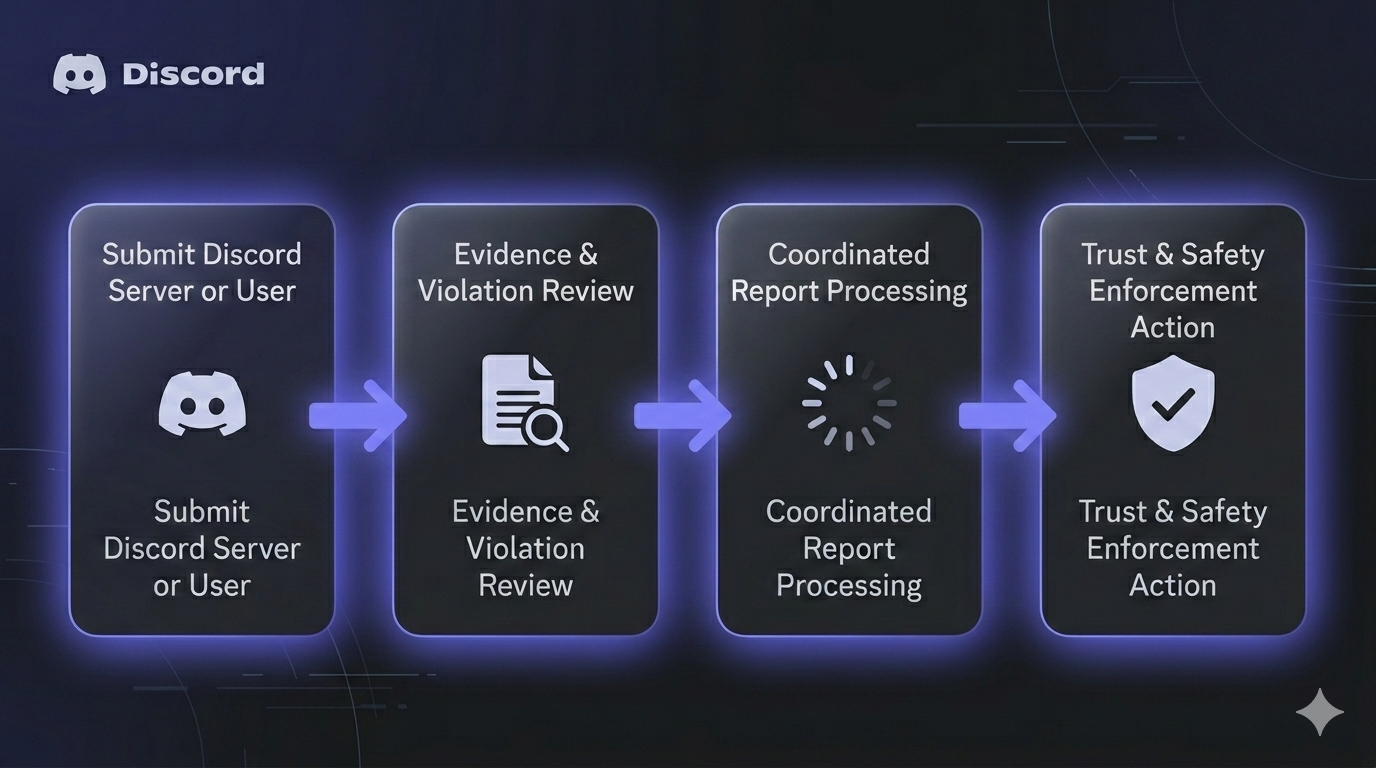

Discord's reporting system operates through two primary channels. The in-app reporting mechanism allows users to right-click any message, user profile, or server and select "Report" to classify the violation. The Trust & Safety portal at dis.gd/request accepts detailed tickets with evidence attachments, message links, and written context. According to Discord's official documentation, their team reviews each report individually and evaluates the evidence before taking action — report volume alone does not trigger automated enforcement.

The Trust & Safety review process follows a priority queue. High-harm violations including child exploitation, terrorism, and imminent physical harm receive priority review within 24–48 hours. Standard violations like harassment, hate speech, and misinformation enter a queue with an approximate one-week review target. Complex investigations involving coordinated abuse or multiple violations require extended review periods of days to weeks.

Does Mass Reporting Actually Work on Discord?

Discord officially states that "volume of reports has no bearing on whether action is taken on an account." This policy means that 500 identical low-quality reports carry less enforcement weight than a single report containing documented evidence with message links, screenshots, and clear ToS violation context. The effectiveness of a Discord mass report depends entirely on evidence quality, report categorization accuracy, and the severity of the documented violation.

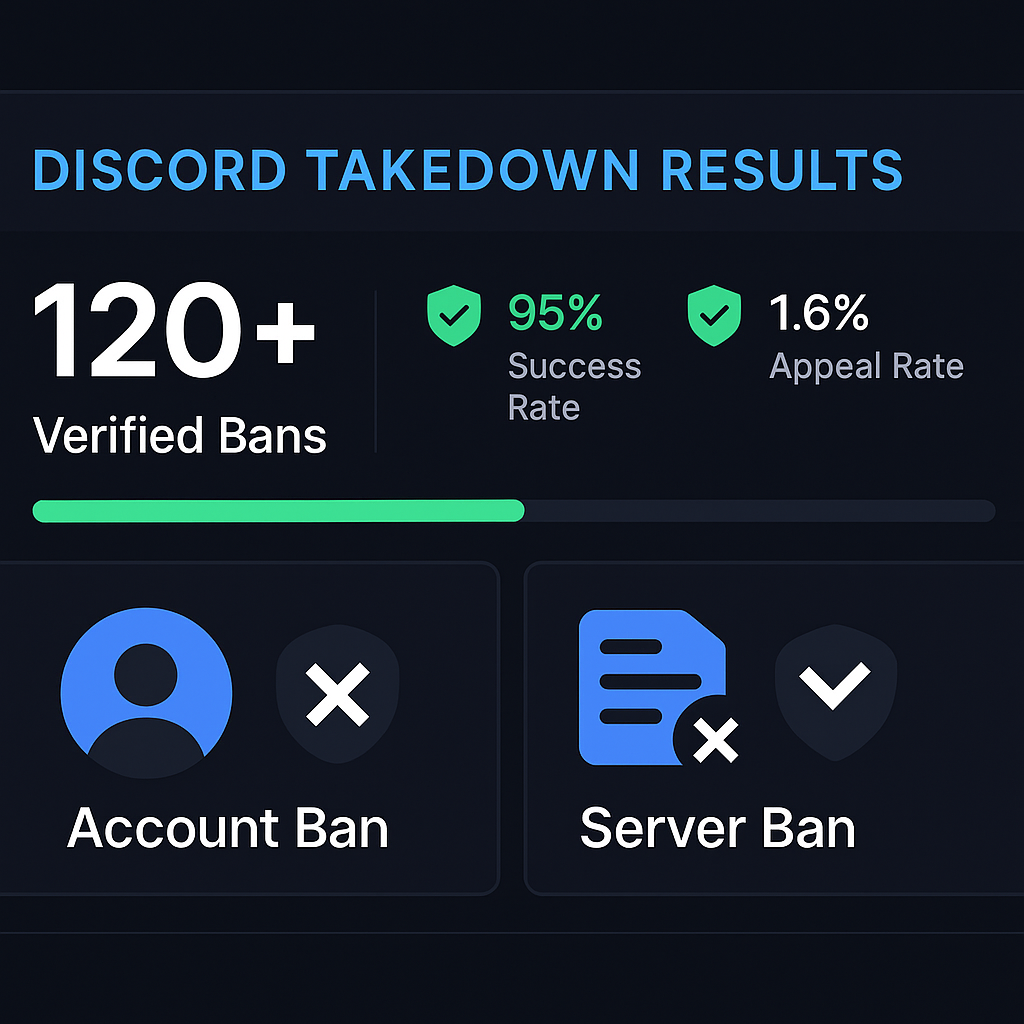

Professional enforcement services work within the volume policy by focusing on evidence-based reporting. At Your Supplier Guy, each case begins with comprehensive violation documentation: message links, user IDs, server structures, and timestamp-verified evidence. Reports are then classified under the most relevant violation categories (harassment, spam, impersonation, NSFW distribution, or illegal activity) so that each submission adds unique enforcement value. This approach has produced a 92% success rate across 214+ Discord enforcement cases, with average resolution times of 24–72 hours for high-priority violations.

"It is against our policies to mislead Discord's support teams. Do not make false or malicious reports to our Trust & Safety or other customer support teams, send multiple reports about the same issue, or ask a group of users to report the same content."

— Discord Official Reporting Guidelines

This policy underscores the critical distinction between coordinated false reporting, which violates Discord's ToS and can result in the reporter's account being banned, and legitimate, evidence-backed enforcement where multiple affected parties independently report genuine violations. Professional services ensure compliance with Discord's policies while maximizing enforcement outcomes through strategic evidence presentation and proper violation classification. This approach differs fundamentally from the Discord mass report bot tools found on GitHub, which automate identical reports from multiple tokens. That method both violates ToS and produces diminishing enforcement returns.

How to Mass Report a Discord Server or Account (Step-by-Step)

An effective Discord server mass report requires systematic evidence collection and strategic report submission. The following process outlines how to build an enforcement case that maximizes the probability of Trust & Safety action.

Step 1: Document the Violation Thoroughly

Capture screenshots and record message links (right-click → Copy Message Link) for every piece of content that violates Discord's Community Guidelines. Include the violator's User ID (enable Developer Mode in Settings → Advanced, then right-click → Copy User ID), the server ID, and channel context. Timestamp each piece of evidence — Discord's Trust & Safety team verifies chronological consistency.
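As a minimal sketch of the timestamping part of Step 1, the snippet below splits a copied message link into its IDs and derives the message's send time from the message ID. It assumes the publicly documented link layout (`https://discord.com/channels/<server>/<channel>/<message>`) and the Discord snowflake format, where the bits above the lowest 22 hold milliseconds since 2015-01-01 UTC. The function names `parse_message_link` and `snowflake_time` are hypothetical helpers for illustration, not part of any Discord tool.

```python
import re
from datetime import datetime, timedelta, timezone

# Discord snowflakes count milliseconds from this epoch (2015-01-01T00:00:00Z).
DISCORD_EPOCH = datetime(2015, 1, 1, tzinfo=timezone.utc)

# Assumed link layout; "@me" appears in place of a server ID for direct messages.
LINK_RE = re.compile(
    r"^https://(?:(?:ptb|canary)\.)?discord(?:app)?\.com/channels/(\d+|@me)/(\d+)/(\d+)$"
)


def snowflake_time(snowflake: int) -> datetime:
    """Return the UTC creation time encoded in a Discord ID."""
    return DISCORD_EPOCH + timedelta(milliseconds=snowflake >> 22)


def parse_message_link(link: str) -> dict:
    """Split a copied message link into IDs plus the message's send time."""
    match = LINK_RE.match(link.strip())
    if match is None:
        raise ValueError(f"not a Discord message link: {link!r}")
    guild, channel, message = match.groups()
    message_id = int(message)
    return {
        "guild_id": None if guild == "@me" else int(guild),
        "channel_id": int(channel),
        "message_id": message_id,
        "sent_at": snowflake_time(message_id),
    }
```

Storing the derived `sent_at` value next to each screenshot gives a timestamp that does not depend on the capturing device's clock or time zone.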

Step 2: Classify the Violation Category

Select the most appropriate report category for each piece of evidence. Discord's reporting system offers categories including harassment and bullying, spam and fraud, impersonation, sharing NSFW content in non-age-gated channels, threatening violence, promoting self-harm, and distributing illegal content. Accurate categorization is essential — miscategorized reports receive lower review priority.

Step 3: Submit In-App Reports

For each documented violation, use Discord's native in-app reporting. On desktop, right-click the violating message and select "Report." On mobile, hold down on the message and follow the reporting flow. Select the specific policy violation that matches your evidence. Each report should reference a distinct piece of evidence or violation instance.

Step 4: File a Trust & Safety Ticket

Submit a comprehensive report through Discord's Trust & Safety portal. Include all message links, User IDs, a written narrative explaining the violation pattern, and any additional context such as the duration of abuse, number of affected users, or pattern of escalating behavior. Detailed tickets receive higher review priority than brief, context-free submissions.
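One way to keep the ticket contents organized is sketched below: a small record type per piece of evidence and a draft object that renders everything as plain text in chronological order. The class and field names (`EvidenceItem`, `TicketDraft`, `captured_at`, and so on) are illustrative assumptions. They do not mirror any Discord form or API, and the output is meant to be pasted into the written narrative by hand.

```python
from dataclasses import dataclass, field


@dataclass
class EvidenceItem:
    message_link: str
    category: str      # e.g. "harassment"; free text in this sketch
    captured_at: str   # ISO 8601 UTC string, e.g. "2024-05-01T09:00:00Z"
    note: str = ""


@dataclass
class TicketDraft:
    reported_user_id: str
    summary: str
    items: list[EvidenceItem] = field(default_factory=list)

    def render(self) -> str:
        """Render the draft as plain text, oldest evidence first."""
        lines = [
            f"Reported user ID: {self.reported_user_id}",
            f"Summary: {self.summary}",
            "Evidence:",
        ]
        # ISO 8601 strings in one time zone sort chronologically as text.
        ordered = sorted(self.items, key=lambda item: item.captured_at)
        for number, item in enumerate(ordered, start=1):
            lines.append(
                f"{number}. [{item.category}] {item.message_link} "
                f"(captured {item.captured_at})"
            )
            if item.note:
                lines.append(f"   {item.note}")
        return "\n".join(lines)
```

Sorting by capture time keeps the narrative chronologically consistent, which matches the emphasis on timestamps in Step 1.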

Step 5: Coordinate Legitimate Independent Reports

If multiple community members are affected by the same violation, each affected party should submit their own independent report with their own evidence and personal experience. This differs from organizing mass false reporting — each reporter contributes genuine, first-hand documentation of how the violation impacted them. Community moderators can facilitate this by informing affected members of the proper reporting procedures.

Step 6: Monitor and Follow Up

Discord sends email confirmations when reports result in policy enforcement. If no action is taken within the expected timeframe (24–48 hours for high-harm, one week for standard violations), submit a follow-up ticket referencing the original report number. Persistent, well-documented follow-ups demonstrate ongoing harm and can escalate review priority.
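The follow-up timing can be tracked with a few lines of code. The sketch below uses the review windows quoted in this guide (48 hours for high-harm reports, one week for standard ones) as assumed upper bounds. The `REVIEW_WINDOWS` table and the `follow_up_due` function are hypothetical names, and the windows are this guide's figures rather than guarantees from Discord.

```python
from datetime import date, timedelta

# Review windows taken from the figures in this guide (assumed upper bounds).
REVIEW_WINDOWS = {
    "high_harm": timedelta(days=2),
    "standard": timedelta(days=7),
}


def follow_up_due(submitted: date, severity: str) -> date:
    """Return the first date on which a follow-up ticket is reasonable."""
    try:
        return submitted + REVIEW_WINDOWS[severity]
    except KeyError:
        raise ValueError(f"unknown severity: {severity!r}") from None
```

For example, a standard report submitted on 1 January 2024 becomes eligible for a follow-up on 8 January 2024.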

What Is a Discord Mass Report Bot?

A Discord mass report bot is automated software designed to submit multiple violation reports from different Discord accounts (tokens) against a single target. These tools, typically Python scripts hosted on GitHub, use multi-threading, proxy rotation, and randomized user agents to send coordinated reports across different violation categories. Popular Discord mass reporter tools support configurable report reasons, server ID and user ID targeting, and real-time reporting statistics.

The technical architecture of a typical Discord mass report bot involves three components: a token pool (multiple Discord accounts stored in a text file), a proxy list for IP rotation, and a report submission engine that interfaces with Discord's API endpoints. Configuration files allow users to specify the target user ID, server ID, thread count, and whether to run in proxyless mode for smaller-scale operations.

Using Discord mass report tools carries significant risks. Discord's Terms of Service explicitly prohibit automated abuse of the reporting system. Accounts detected using mass report bots face permanent suspension, and Discord's anti-automation systems have become increasingly sophisticated at detecting token-based report patterns. Discord applies rate limiting and fingerprinting measures that render many open-source mass report tools ineffective, and the reporter's own accounts become the primary enforcement target.

"This tool was strictly developed to demonstrate how straightforward it can be to spam a service like Discord. Report bots tend to increase the chances in account terminations."

— Open-source Discord mass report tool disclaimer

This self-acknowledged risk is why professional enforcement services use evidence-based methods rather than automated report bots. Professional approaches focus on documenting genuine violations, preparing comprehensive Trust & Safety tickets, and relying on legitimate multi-reporter coordination, which achieves higher success rates without placing client accounts at risk.

Discord Mass Report Scam: How to Identify and Avoid It

The Discord mass report scam is one of the most widespread social engineering attacks targeting Discord users. The scam typically begins when a compromised friend account sends a message claiming they "accidentally reported" your account for fraud or illegal purchases. The victim is directed to contact a fake Discord support agent, who then requests email changes, verification codes, or disabling of two-factor authentication, ultimately achieving full account takeover.

How the Discord Report Scam Works

The attack follows a consistent manipulation pattern. A compromised account (often someone in the victim's friend list) sends an urgent message about an accidental report. The scammer fabricates evidence including fake Discord support emails and ticket numbers. The victim is directed to a fake "support agent" on Discord who requests sensitive credentials. Once the victim shares verification codes or changes their email to the attacker's email, the account is fully compromised. According to Bitdefender's analysis, the primary goal is account takeover followed by propagation — compromised accounts immediately send the same scam to their entire friend list.

Five Warning Signs of the Discord Mass Report Scam

Recognize these red flags immediately:

1. Any unsolicited DM claiming someone accidentally reported your account
2. Instructions to contact a third-party "support agent" through Discord
3. Requests to change your account email or share verification codes
4. Urgency tactics claiming your account will be banned within hours
5. Any communication that does not come through Discord's official support channels

Discord's moderation system never initiates enforcement through private messages, and no legitimate moderation action requires you to contact a third party.
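The text-based warning signs can be approximated with a simple keyword heuristic, sketched below. The patterns and the names `WARNING_SIGNS` and `matched_warning_signs` are illustrative assumptions, not a vetted detector. The fifth sign (whether a message arrived through an official channel) cannot be judged from message text, so it is left out.

```python
import re

# Rough patterns for the first four warning signs; wording is an assumption.
WARNING_SIGNS = {
    "accidental_report": re.compile(r"accident(?:al|ally)\s+report", re.I),
    "third_party_agent": re.compile(
        r"(?:support|staff|trust\s*&?\s*safety)\s+(?:agent|member|team)", re.I
    ),
    "credential_request": re.compile(
        r"(?:verification|2fa|backup)\s+code|change\s+(?:your\s+)?e-?mail", re.I
    ),
    "urgency": re.compile(
        r"within\s+\d+\s+(?:hours?|minutes?)|immediately|permanently\s+banned", re.I
    ),
}


def matched_warning_signs(message: str) -> list[str]:
    """Return the names of all warning signs whose pattern occurs in the DM."""
    return [name for name, pattern in WARNING_SIGNS.items() if pattern.search(message)]
```

A heuristic like this misses reworded scripts and can flag harmless messages, so any match is best treated as a prompt to stop and verify through Discord's official support channels rather than as proof.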

Discord Report Categories and Violation Types

Understanding Discord's report classification system is essential for reporting a Discord account effectively. Each violation category triggers different review priorities and enforcement outcomes within Discord's Trust & Safety pipeline.

| Violation Category | Review Priority | Typical Enforcement | Evidence Required |
|---|---|---|---|
| Child Safety / CSAM | Immediate (24h) | Account + IP ban | Message link + context |
| Imminent Physical Harm | Immediate (24–48h) | Account ban + law enforcement referral | Message link + timestamps |
| Extremism / Terrorism | High (24–48h) | Account + server ban | Multiple message links |
| Harassment / Bullying | Standard (1 week) | Warning → suspension → ban | Pattern documentation |
| Hate Speech | Standard (1 week) | Warning → account restriction | Message links + context |
| Spam / Fraud | Standard (1 week) | Account ban | Multiple instances |
| Impersonation | Standard (1 week) | Account ban | Identity proof + comparison |
| NSFW in Non-Gated Channels | Standard (1 week) | Server warning → restriction | Channel screenshots |
| Misinformation | Lower (1–2 weeks) | Content removal → warning | Factual evidence + sources |

Professional enforcement services like Your Supplier Guy match each piece of evidence to the highest-priority applicable category. A single violation may qualify under multiple categories — for example, a targeted harassment campaign may simultaneously involve harassment, impersonation, and spam. Filing separate, evidence-specific reports across appropriate categories generates broader Trust & Safety coverage than submitting everything under a single category. This multi-category approach is a core reason professional mass report services outperform both manual individual reporting and automated bot tools.

Manual Reporting vs. Mass Report Bot vs. Professional Service

The three primary approaches to Discord mass report enforcement differ significantly in effectiveness, compliance risk, and enforcement outcomes.

| Factor | Manual Reporting | Discord Mass Report Bot | Professional Service |
|---|---|---|---|
| Success Rate | 15–25% | 10–30% (declining) | 92% |
| ToS Compliance | ✅ Fully compliant | ❌ Violates ToS | ✅ Fully compliant |
| Risk to Reporter | None | High (account ban) | None (NDA-protected) |
| Average Resolution Time | 1–4 weeks | Unpredictable | 24–72 hours |
| Evidence Quality | Variable | None (automated) | Comprehensive documentation |
| Multi-Category Coverage | Usually single | Random/configurable | Strategic multi-category |
| Follow-Up Capability | Limited | None | Managed escalation |
| Cost | Free | Free (+ risk costs) | Case-based pricing |
| Scalability | Low | High (but risky) | High (compliant) |

The comparison indicates that Discord mass report bot tools occupy the worst position on risk-adjusted metrics. While they offer superficial scalability, they violate Discord's Terms of Service, so the reporter, not the target, becomes the primary enforcement target. Discord's anti-automation systems have evolved significantly since 2023, rendering many open-source tools ineffective and detectable. Professional services from Your Supplier Guy combine the scalability of coordinated reporting with full ToS compliance, an evidence-backed methodology, and resolution timelines backed by a 72-hour refund guarantee.

Cross-Platform Mass Report Solutions

Discord mass reporting operates within a broader ecosystem of platform enforcement. Organizations facing violations on Discord frequently encounter coordinated abuse across multiple platforms simultaneously — an impersonation account on Discord often coincides with fraudulent profiles on Instagram, TikTok, and X (Twitter). A cross-platform enforcement strategy addresses violations holistically rather than in isolation.

Your Supplier Guy provides coordinated enforcement across 15+ platforms. Related mass report and enforcement services include Telegram mass report operations, Facebook mass reporting, YouTube mass report tools, WhatsApp mass reporting, TikTok ban services, Telegram ban enforcement, Facebook DMCA takedowns, YouTube video removal, and LinkedIn ban services. Cross-platform cases receive coordinated enforcement timelines, with evidence documentation shared across platform-specific Trust & Safety teams for maximum enforcement coverage.

How to Protect Your Discord Account From Mass Reports

While Discord states that false mass reports should not result in enforcement against innocent accounts, the reality documented across Discord's own community forums shows that mass reporting does cause account disabling in practice. Protecting your account requires both preventive measures and rapid response capabilities.

Preventive Security Measures

Enable two-factor authentication using an authenticator app (not SMS). Use a unique, strong password for your Discord account. Review your Community Guidelines compliance regularly: ensure your profile, messages, and server content do not inadvertently violate Discord's Terms of Service. Restrict DM permissions from unknown users through Privacy & Safety settings. Avoid linking your Discord account to services like Steam through public profile connections, as this creates attack vectors for variants of the Discord mass report scam that target cross-platform accounts.

Responding to Unfair Account Disabling

If your account is disabled following mass reporting, submit an appeal immediately through Discord's official support portal. Include evidence of your account's compliance history, documentation of the mass reporting campaign against you, and any evidence of coordination among the reporters. Discord's appeal process is not guaranteed, but well-documented appeals with specific counter-evidence receive higher review priority. According to community reports, some users have successfully recovered accounts after submitting 3–22 separate appeal tickets — persistence and evidence quality determine outcomes.

Frequently Asked Questions About Discord Mass Report

Does mass reporting actually work on Discord?

Discord officially states that report volume alone does not determine enforcement action. However, coordinated reports with documented evidence of genuine Terms of Service violations significantly increase the likelihood of Trust & Safety review. Professional services achieve a 92% success rate by submitting evidence-backed reports across appropriate violation categories rather than relying on volume.

How many reports does it take to ban a Discord account?

There is no fixed number. Discord's Trust & Safety team evaluates report quality and evidence, not quantity. A single well-documented report with message links and clear ToS violation evidence can trigger enforcement, while hundreds of identical low-quality reports may be ignored. High-harm reports like child safety are prioritized within 24–48 hours regardless of volume.

What is the Discord mass report scam?

The Discord mass report scam involves someone claiming they accidentally reported your account and directing you to a fake support agent. The goal is account takeover through social engineering. Victims are tricked into changing their email or sharing verification codes. Discord never contacts users through DMs about reports, and no moderation action requires contacting a third party.

Can you mass report a Discord server?

Yes. Discord allows reporting servers through the in-app system or the Trust & Safety portal. Server-level reports are most effective when they document systemic violations across the server, such as organized harassment, NSFW content in non-age-gated channels, or illegal activity. Providing multiple message links from different channels strengthens the report's enforcement potential.

Is using a Discord mass report bot legal?

Using automated Discord mass report bot tools violates Discord's Terms of Service, which explicitly prohibit misleading support teams and automating report submissions. Accounts using these tools risk permanent suspension. Professional enforcement services use legitimate, evidence-based methods that comply with Discord's policies while achieving superior enforcement outcomes with a 92% success rate.

How long does Discord take to respond to reports?

Discord's review timeline depends on violation severity. High-harm reports (child safety, extremism, imminent harm) are reviewed within 24–48 hours. Lower-harm reports including harassment and hate speech typically receive review within one week. Complex investigations involving coordinated abuse may require days to weeks depending on scope and evidence complexity.

What is a Discord mass reporter tool?

A Discord mass reporter is software that automates the submission of multiple reports against a Discord account, server, or message. These tools typically use multiple Discord tokens with proxy support to send reports across violation categories. Most are open-source Python scripts on GitHub, though their use violates Discord's Terms of Service and risks permanent banning of the reporter's accounts.

How do I protect my Discord account from mass reports?

Enable two-factor authentication with an authenticator app, use a unique password, never share verification codes, and be cautious of unsolicited DMs claiming your account has been reported. Ensure your activity complies with Discord's Community Guidelines. If your account is disabled unfairly, submit an appeal through Discord's official support portal immediately with counter-evidence.

What happens when you get mass reported on Discord?

When multiple reports are filed against an account, Discord's Trust & Safety team reviews the evidence. If violations are confirmed, consequences range from warnings to temporary restrictions to permanent account disabling. Discord states that report volume does not trigger automated action and that each report is evaluated on its merits. Appeals can be submitted if enforcement was applied in error.

Can a professional service help with Discord mass reporting?

Yes. Professional enforcement services like Your Supplier Guy handle Discord mass report operations through evidence-based strategies compliant with platform policies. These services document violations, classify report categories strategically, coordinate legitimate reports, and manage Trust & Safety communication. Success rates reach 92% with 24–72 hour average resolution and a 72-hour refund guarantee on every case.

Conclusion

Discord mass report enforcement is fundamentally an evidence quality challenge, not a volume challenge. Discord's Trust & Safety team evaluates documented violations, report categorization accuracy, and the severity of policy breaches, not the raw number of reports submitted. Automated Discord mass report bot tools violate the Terms of Service and increasingly face detection, placing reporters at greater risk than their targets. Professional enforcement services achieve a 92% success rate by combining comprehensive violation documentation, strategic multi-category reporting, and managed Trust & Safety communication across 214+ completed Discord cases. Whether addressing harassment, impersonation, fraud, or coordinated abuse, evidence-based approaches consistently outperform both manual individual reporting and automated bot tools across all enforcement metrics.