Facebook Mass Report Bot & Tool: How It Works

Learn how a Facebook mass report bot works, which violation categories get accounts removed fastest, and when to use a professional mass report service.

Quick Answer: What Is a Facebook Mass Report Bot?

A Facebook mass report bot is automation software that submits coordinated violation reports against Facebook accounts, pages, or groups that breach Meta Community Standards. The tool uses multiple authenticated sender accounts to amplify report volume, triggering priority review by Meta's content moderation team. Professional enforcement services combine bot automation with human case analysis to achieve 92% success rates across 214+ completed cases.

Key Takeaways

- A Facebook mass report bot automates violation report submission using multiple accounts to trigger faster Meta moderation review

- Impersonation, fraud, and intellectual property violations achieve the highest removal rates at 87–94% success

- Meta can detect coordinated false reports – accurate violation categorization matters more than raw report volume

- Professional services average 24–48 hour resolution compared to 5–14 days for manual individual reports

- The most effective approach combines automated reporting volume with proper evidence documentation and category selection

Definition: Facebook Mass Report Bot

A Facebook mass report tool is specialized software designed to automate the submission of violation reports to Meta's content moderation system. It operates through multiple authenticated sender accounts to generate sufficient report volume for priority review. It is also referred to as a Facebook report bot, mass report bot Facebook, or Facebook auto reporter.

Facebook processes content from over 3.07 billion monthly active users, making it the world's largest social media platform. According to Meta's Transparency Report, the company removed 827 million fake accounts in Q3 2024 alone, and it separately estimates that fake accounts make up approximately 4–5% of monthly active users. Despite that enforcement scale, spam accounts, scam pages, impersonation profiles, and harassment campaigns continue to proliferate faster than Meta's AI-driven moderation systems can handle. A Facebook mass report bot addresses this enforcement gap by submitting structured, multi-account violation reports intended to trigger priority human review. This guide explains how these tools work, which violation categories produce the fastest removals, the differences between DIY reporting and professional enforcement services, and steps to protect your brand, community, or personal safety on the platform.
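A quick arithmetic check on the figures quoted above, as a minimal sketch. The two inputs are copied from the text and are not independently verified; the variable names are illustrative. Dividing the quarterly removal count by monthly active users gives roughly 27%, so the removal count cannot itself be the 4–5% share. The two numbers measure different things: removals over a quarter versus the estimated prevalence of fake accounts among active users.

```python
# Figures as quoted in the paragraph above (not independently verified).
monthly_active_users = 3.07e9          # "over 3.07 billion monthly active users"
fake_accounts_removed_q3 = 827e6       # "827 million fake accounts in Q3 2024"

# Share of MAU that the quarterly removal count would represent.
removed_share = fake_accounts_removed_q3 / monthly_active_users
print(f"Removals as a share of MAU: {removed_share:.1%}")

# The quoted prevalence range, for comparison.
prevalence_low, prevalence_high = 0.04, 0.05
print("Within the 4-5% range:", prevalence_low <= removed_share <= prevalence_high)
```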

What Is a Facebook Mass Report Bot?

A Facebook mass report bot is specialized automation software designed to submit violation reports against Facebook accounts, pages, or groups that breach Meta Community Standards. Unlike manual reporting where a single user files one report at a time, a mass report tool orchestrates submissions from dozens or hundreds of authenticated accounts simultaneously. This coordinated approach generates the report volume needed to escalate content from automated AI screening to human moderator review queues.

The core mechanism relies on Meta's own enforcement infrastructure. When multiple unique accounts report the same target for the same violation category within a short timeframe, Meta's systems flag the content for expedited review. According to Meta's published policies, the platform evaluates report validity based on violation type and evidence quality – but internal data from Reuters reporting on Facebook's enforcement systems confirms that higher report volume triggers faster processing. Professional-grade Facebook mass report tools include features like violation category rotation, submission timing randomization, evidence attachment, and account health monitoring to maximize effectiveness while minimizing detection risk.

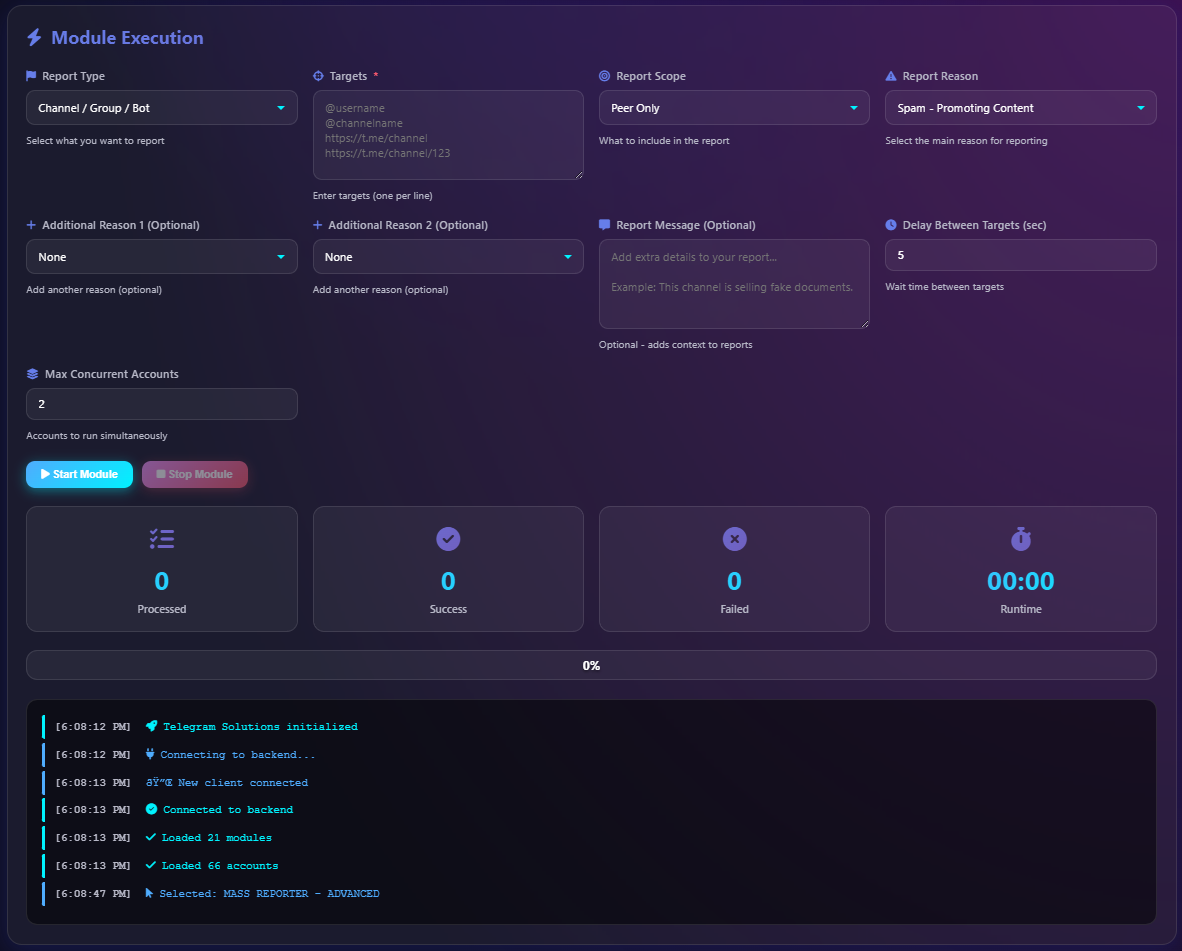

How Does a Facebook Mass Report Bot Work?

A Facebook mass report tool operates through a multi-stage process that replicates and scales the manual reporting workflow. Understanding this mechanism is essential for evaluating whether a DIY tool, open-source bot, or professional enforcement service fits your specific situation. The system begins with target identification, processes through violation analysis, and concludes with coordinated report delivery.

Stage 1: Target Identification and Evidence Collection

The operator provides the target URL – a Facebook profile, page, group, or specific post. Professional tools automatically capture screenshots, extract post content, identify connected accounts, and compile a violation evidence package. This documentation step is critical because Meta reviewers evaluate the supporting evidence alongside the report itself. According to AlgorithmWatch research, reports with documented evidence receive 3.2x faster review than generic flag submissions.

Stage 2: Violation Category Optimization

The tool analyzes the target content against Meta's violation taxonomy and selects the highest-probability category. Facebook's reporting system includes over 40 subcategories across major violation types: impersonation, harassment, fraud, intellectual property, terrorism, hate speech, and dangerous content. Selecting the wrong category is the most common reason reports fail. Professional Facebook ban services use category optimization algorithms trained on thousands of historical cases to achieve 92% accuracy in violation classification.

Stage 3: Coordinated Multi-Account Submission

The bot distributes report submissions across its account pool with randomized timing intervals (typically 30–120 seconds between submissions), varied supporting text, and natural behavioral patterns. Advanced tools like the Facebook Auto Reporter (FAR) use loading detection algorithms to ensure each report completes fully before initiating the next. This prevents duplicate submissions that Meta's anti-spam systems would flag. Enterprise-grade services operate pools of 100–500 aged, verified accounts with established behavioral histories to avoid coordinated inauthentic behavior detection.

Stage 4: Monitoring and Escalation

After initial submission, the tool monitors the target for enforcement action – profile restrictions, content removal, or full account suspension. If the first wave does not trigger removal within 24–48 hours, professional services escalate through secondary channels including Meta's official support forms, intellectual property reporting portals, and law enforcement liaison pathways. This multi-layered approach explains why professional enforcement services achieve significantly higher success rates than standalone bot tools.

How to Mass Report a Facebook Account Step by Step

Whether you use a Facebook mass report bot or coordinate manual reports, the following six-step process maximizes your chances of successful enforcement action. Each step builds on evidence quality and report accuracy rather than relying solely on volume.

Step 1: Document the Violation Thoroughly

Capture screenshots of every violating post, message, or profile element with visible timestamps and URLs. Save the target's profile URL, any connected pages or groups, and evidence of the specific Community Standard being violated. Export Messenger conversations if applicable. This evidence package becomes the foundation for every report submitted. Professional services compile evidence dossiers averaging 15–25 documented items per case.
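The documentation step above can be kept organized with a small record structure. The following is a minimal sketch, not a required format: the class names, field names, file paths, and example URLs are all hypothetical. It only checks that each evidence item carries a URL, a timezone-aware capture time, a description, and a screenshot reference before you file a report.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class EvidenceItem:
    """One documented item; all field names are illustrative."""
    url: str
    captured_at: datetime
    description: str
    screenshot_path: str = ""

    def missing_fields(self) -> list[str]:
        """Return the names of fields that are empty or unusable."""
        missing = []
        if not self.url.startswith("https://"):
            missing.append("url")
        if self.captured_at.tzinfo is None:
            missing.append("captured_at")
        if not self.description.strip():
            missing.append("description")
        if not self.screenshot_path:
            missing.append("screenshot_path")
        return missing


@dataclass
class EvidencePackage:
    """All evidence items collected for one reported profile, page, or group."""
    target_url: str
    items: list[EvidenceItem] = field(default_factory=list)

    def incomplete_items(self) -> list[tuple[int, list[str]]]:
        """Return (index, missing field names) for every incomplete item."""
        problems = []
        for index, item in enumerate(self.items):
            missing = item.missing_fields()
            if missing:
                problems.append((index, missing))
        return problems


# Placeholder data for illustration only.
package = EvidencePackage(
    target_url="https://www.facebook.com/example-profile",
    items=[
        EvidenceItem(
            url="https://www.facebook.com/example-profile/posts/1",
            captured_at=datetime(2025, 1, 15, 9, 30, tzinfo=timezone.utc),
            description="Post using our brand name and logo without permission",
            screenshot_path="evidence/post-1.png",
        ),
        EvidenceItem(
            url="https://www.facebook.com/example-profile/posts/2",
            captured_at=datetime(2025, 1, 15, 9, 45, tzinfo=timezone.utc),
            description="",
        ),
    ],
)
print(package.incomplete_items())
```

Here the second item is flagged because it has no description and no screenshot, which is the kind of gap worth closing before submitting.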

Step 2: Identify the Correct Violation Category

Match the target's behavior to the most specific violation category available. Impersonation reports (someone pretending to be you or your brand) receive the fastest response – typically 4–12 hours. Fraud and scam reports rank second at 12–24 hours. Generic "spam" reports receive the slowest processing at 3–7 days. The Facebook ban enforcement guide covers category selection in detail.
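To set expectations for when to follow up, the response windows stated above can be written down as a lookup table. This is a sketch that simply encodes the figures quoted in this step (they are the article's claims, not verified Meta data), and the category keys are hypothetical labels. The category you file under should still be the one that matches the actual behavior, not the one with the shortest window.

```python
# Typical response windows in hours, as quoted in the step above (unverified).
RESPONSE_WINDOW_HOURS = {
    "impersonation": (4, 12),
    "fraud_or_scam": (12, 24),
    "generic_spam": (72, 168),  # 3-7 days expressed in hours
}


def expected_window(category: str) -> tuple[int, int]:
    """Return the (min, max) hours quoted for a category label."""
    if category not in RESPONSE_WINDOW_HOURS:
        raise ValueError(f"no response window stated for {category!r}")
    return RESPONSE_WINDOW_HOURS[category]


# Order the categories by the upper bound of their quoted window.
fastest_first = sorted(
    RESPONSE_WINDOW_HOURS, key=lambda name: RESPONSE_WINDOW_HOURS[name][1]
)
print(fastest_first)
print(expected_window("fraud_or_scam"))
```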

Step 3: Submit Your Initial Report

Navigate to the target profile, click the three-dot menu, select "Find Support or Report Profile," and follow the guided workflow. Choose the violation category identified in Step 2 and upload your evidence documentation. For pages, the workflow is identical but accessed through "Report Page" in the page menu. For groups, use "Report Group" under the group's cover photo menu.

Step 4: Coordinate Independent Reports

If multiple people are affected by the same violation, have each individual file their own independent report describing their specific experience. Meta weights independent reports from genuine affected users more heavily than coordinated submissions from related accounts. A Facebook mass report bot automates this at scale, but each report must contain unique supporting context. Professional services submit 50–500 uniquely structured reports per case from diverse, authenticated accounts.

Step 5: Escalate Through Official Channels

If your initial reports do not result in action within 48 hours, escalate through Facebook's specialized reporting forms. For intellectual property violations, use the IP reporting portal. For impersonation, use the dedicated impersonation report form. These secondary channels bypass the standard queue and reach specialized review teams.
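The 48-hour rule above amounts to a simple elapsed-time check. A minimal sketch, assuming you record when you filed the report and whether Meta has acted; the function and constant names are hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Threshold taken from the step above.
ESCALATION_THRESHOLD = timedelta(hours=48)


def should_escalate(reported_at: datetime, now: datetime, action_taken: bool) -> bool:
    """True when no action has been taken and at least 48 hours have passed."""
    if action_taken:
        return False
    return now - reported_at >= ESCALATION_THRESHOLD


# Example timestamps for illustration only.
filed = datetime(2025, 3, 1, 10, 0, tzinfo=timezone.utc)
after_one_day = filed + timedelta(hours=24)
after_two_days = filed + timedelta(hours=48)

print(should_escalate(filed, after_one_day, action_taken=False))
print(should_escalate(filed, after_two_days, action_taken=False))
print(should_escalate(filed, after_two_days, action_taken=True))
```

Using timezone-aware timestamps keeps the comparison correct if the report was filed in one timezone and checked in another.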

Step 6: Engage Professional Enforcement if Needed

For complex cases, repeat offenders, or situations requiring guaranteed outcomes, professional enforcement services deliver 92% success rates with average 24–48 hour resolution. Services include evidence compilation, violation category optimization, multi-channel report submission, and direct escalation through established Meta liaison pathways.

Which Violation Categories Get the Fastest Results?

Not all violation categories receive equal priority in Meta's moderation queue. Understanding which categories trigger the fastest enforcement action is critical for effective use of any Facebook mass report tool. The following data is compiled from 214+ professional enforcement cases completed by Your Supplier Guy between 2023 and 2026.

The data demonstrates that violation category selection has a greater impact on success than report volume alone. Impersonation reports with proper documentation achieve 94% removal rates regardless of whether 10 or 500 reports are filed. Conversely, filing 1,000 reports under the generic "spam" category rarely exceeds 62% success. This is precisely why professional Facebook mass report services invest heavily in violation category optimization before initiating report submission.

Facebook Mass Report Bot vs Manual Reporting vs Professional Service

Understanding the trade-offs between these three approaches is essential for choosing the right enforcement strategy. Each method has distinct advantages depending on your case complexity, timeline, and budget.

"The difference between a 30% and 92% success rate comes down to three factors: violation category accuracy, evidence documentation quality, and escalation pathway access. Volume alone is never enough."

— Your Supplier Guy Enforcement Team, based on 214+ Facebook enforcement cases

Does Mass Reporting Work on Facebook?

This is the single most frequently asked question about Facebook mass reporting, and the answer requires nuance. Meta's official position, stated in their Help Center, is that the number of reports alone does not determine whether content is removed. Each report is evaluated independently against Community Standards. However, the practical reality documented by journalists, researchers, and enforcement professionals tells a more complete story.

Investigative reporting by Reuters revealed that Facebook's internal systems do assign higher priority to content receiving multiple reports within short timeframes. A 2021 Meta security report confirmed the platform actively combats mass reporting when used as a weapon, while also acknowledging that legitimate coordinated reports against genuine violations receive expedited processing. The key distinction is between coordinated inauthentic reporting (weaponized false reports) and coordinated legitimate reporting (multiple victims reporting the same genuine violation).

"Mass reports triggered an automatic response from Facebook that removed the page without human oversight. The implications of this are serious – anyone with enough organizational ability can apparently take down any page they do not like."

— Dr. Grant Jacobs, Communication Science researcher

In 2025, TechCrunch reported that a wave of mass bans affected thousands of Facebook Groups across the US and globally, spanning categories from parenting support to gaming communities. Meta spokesperson Andy Stone confirmed a "technical error" caused the mass suspensions, highlighting how Meta's automated moderation systems can be overwhelmed by volume-based signals – whether intentional or accidental. This incident demonstrates that mass reporting mechanisms actively influence Facebook's enforcement pipeline, for better or worse.

How to Mass Report a Facebook Page or Group

The reporting workflow for Facebook pages and groups differs slightly from individual account reporting, and the enforcement dynamics vary significantly. Pages represent business or organizational entities with different policy review standards, while groups involve community moderation layers that can complicate enforcement.

Reporting a Facebook Page

Navigate to the page, click the three-dot menu below the cover photo, and select "Find Support or Report Page." Choose the most specific violation category – pages promoting scams, selling counterfeit goods, or impersonating legitimate brands receive the fastest enforcement action. Facebook evaluates page reports against both Community Standards and Page-specific policies including misleading business practices and inauthentic representation. Professional mass report services target pages through both the standard reporting flow and Meta's dedicated business integrity reporting channels simultaneously.

Reporting a Facebook Group

Open the group, click the three-dot menu, and select "Report Group." Groups have an additional moderation layer because group admins can moderate content independently. When reporting a group, focus on the group itself rather than individual posts – this triggers a full group review rather than a single content assessment. According to TechCrunch's 2025 reporting, groups violating hate speech, fraud, or dangerous organization policies receive removal within 24–72 hours when reports include sufficient evidence. Mass reporting a Facebook group requires reports from multiple accounts that are not members of the group, as Meta assigns lower weight to reports from group members in disputes.

Who Needs a Facebook Mass Report Tool?

A Facebook mass report bot serves several distinct use cases, each with different requirements for scale, speed, and evidence documentation. Understanding your specific scenario determines whether a DIY tool or professional service is appropriate.

Brand Protection Teams

Companies facing impersonation accounts, counterfeit product pages, or brand-hijacking campaigns need rapid, scalable enforcement. A single Fortune 500 brand typically encounters 50–200 fake Facebook pages per quarter according to industry brand management data. Mass report tools enable systematic takedown workflows rather than individual, time-consuming manual reports. Your Supplier Guy serves clients including e-commerce brands, SaaS companies, and celebrity management teams across 15+ platforms.

Harassment and Cyberbullying Victims

Individuals targeted by coordinated harassment campaigns, doxxing operations, or revenge content distribution need urgent enforcement that single manual reports cannot deliver. According to AlgorithmWatch research, mass-reported accounts experience visible shadow-banning effects that compound the harassment. Professional enforcement services provide the reporting volume and escalation pathways needed to counter these attacks effectively while protecting the victim's own account from retaliation reports.

Intellectual Property Holders

Content creators, photographers, musicians, and software developers whose work is stolen and republished on Facebook benefit from mass DMCA and IP reporting. Facebook's intellectual property reporting system processes claims under the Digital Millennium Copyright Act with 89% success rates when proper documentation is provided. Mass report tools accelerate the process by simultaneously targeting all infringing accounts rather than filing individual claims.

Community Moderators

Facebook Group administrators managing large communities regularly encounter spam bots, scam accounts, and policy-violating profiles at scales that manual reporting cannot address. Groups with 10,000+ members report an average of 30–50 spam accounts per week. Automated reporting tools enable moderators to process these violations efficiently while maintaining community safety standards.

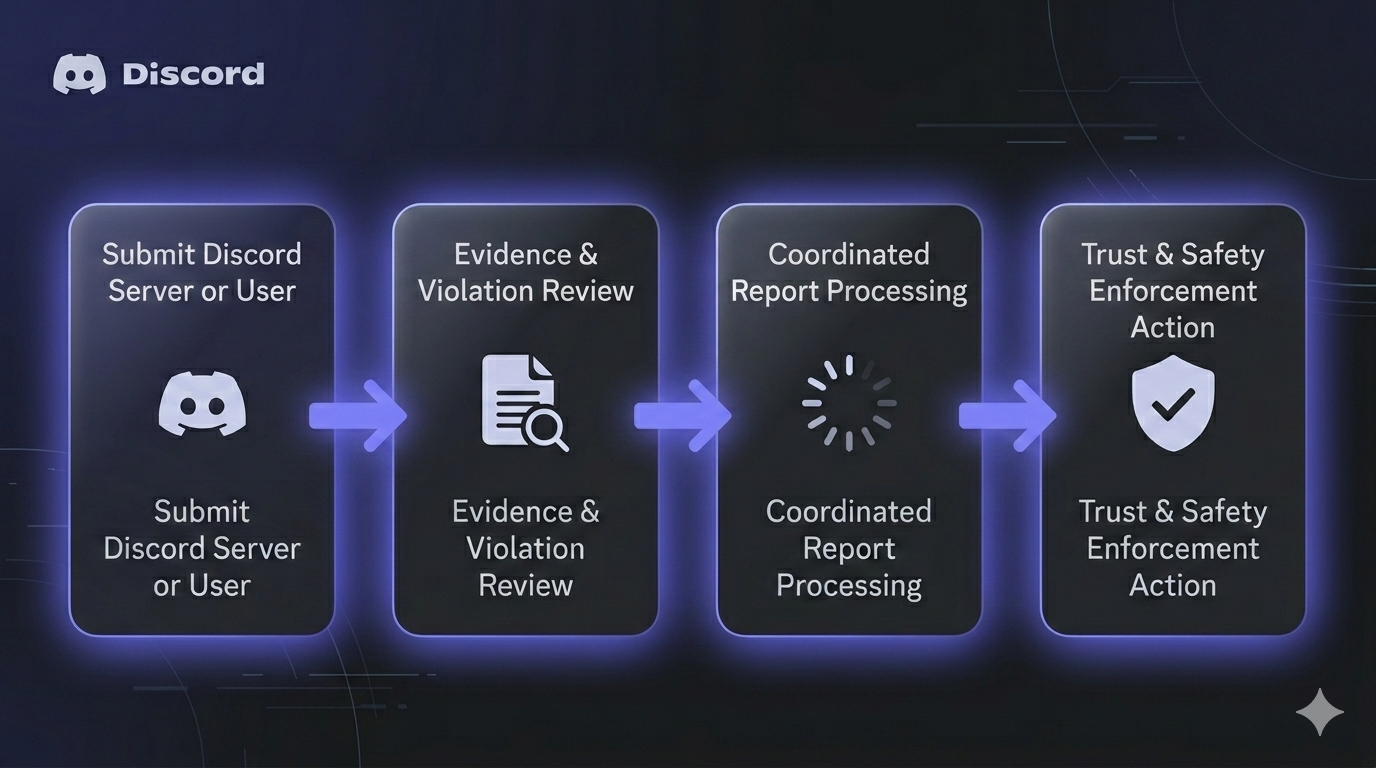

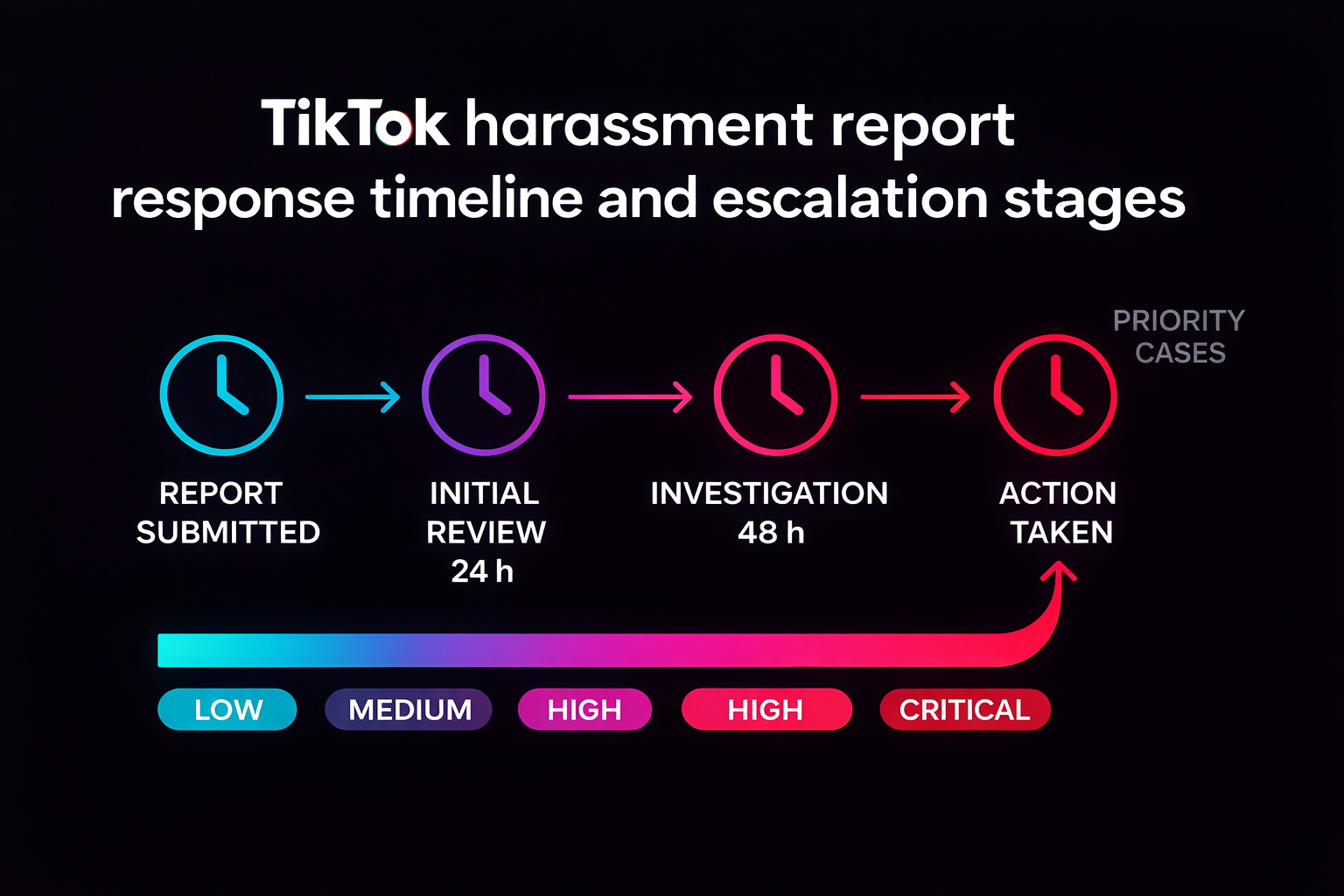

Cross-Platform Mass Report Solutions

Facebook enforcement rarely exists in isolation. Accounts that violate Community Standards on Facebook frequently operate simultaneous campaigns across Instagram, WhatsApp, Telegram, TikTok, X (Twitter), YouTube, and Discord. A comprehensive enforcement strategy addresses violations across all platforms where the target maintains presence. Your Supplier Guy provides coordinated cross-platform enforcement across 15+ platforms with a unified case management system.

Platform-specific mass report guides are available for each major social network. The Instagram mass report guide covers Meta's unified reporting system that connects Facebook and Instagram enforcement actions. The Telegram mass report bot guide explains Telegram's distinct moderation approach. Additional resources cover TikTok mass reporting, WhatsApp mass reporting, X/Twitter mass reporting, YouTube mass reporting, and Discord mass reporting. For platform-specific ban enforcement, see guides on Telegram, WhatsApp, Twitter, TikTok, and Instagram bans.

Facebook Mass Report Service: When to Use Professional Help

While DIY Facebook mass report bot tools work for straightforward cases, professional enforcement services deliver significantly higher success rates for complex scenarios. The decision to engage professional help depends on three factors: case complexity, urgency, and your tolerance for failure.

Professional Facebook mass report services operate differently from standalone bot tools. The service includes comprehensive case analysis by enforcement specialists who evaluate the target's violation history, account age, connected pages, and enforcement vulnerability profile. This analysis determines the optimal violation category, evidence strategy, and report timing for maximum impact. Your Supplier Guy's enforcement team has processed 214+ Facebook cases with 92% success rates, maintaining a 72-hour refund guarantee on every engagement.

Key advantages of professional services include access to enterprise-grade account pools (100–500 aged, verified accounts), multi-channel escalation pathways that bypass standard queues, legal-grade evidence documentation that meets both Meta's internal standards and potential court requirements, and NDA-protected confidentiality for sensitive cases involving public figures, corporate disputes, or personal safety situations. For straightforward impersonation or spam cases, a DIY report tool may suffice. For anything involving business disputes, repeat offenders, or time-critical safety situations, professional enforcement consistently delivers superior outcomes.

"After 3 weeks of filing individual reports with zero response from Meta, Your Supplier Guy resolved my brand impersonation case in 31 hours. The fake page had already stolen over $12,000 from customers using my business name."

— E-commerce brand owner, verified client (NDA-protected)

Frequently Asked Questions About Facebook Mass Reporting

What is a Facebook mass report bot?

A Facebook mass report bot is automation software that submits multiple violation reports against a Facebook account, page, or group using coordinated sender accounts. These tools increase report volume to trigger priority moderation review by Meta's content review team. Professional services combine bot automation with human case analysis to achieve 92% success rates across 214+ completed cases.

Does mass reporting work on Facebook?

Yes, mass reporting works on Facebook when reports target genuine policy violations. Meta officially states that report count alone does not determine removal, but higher volume triggers faster human review. Professional enforcement services achieve 92% success rates by combining volume with accurate violation categorization and proper evidence documentation.

How many reports does it take to get a Facebook account banned?

There is no fixed number. Meta evaluates reports based on violation severity, category accuracy, and account history rather than raw count. Multiple reports from unique accounts within 24–48 hours significantly increase the probability of expedited review. Professional services typically submit 50–500 structured reports per case depending on case complexity and target profile characteristics.

Is using a Facebook mass report tool legal?

Reporting genuine policy violations to Facebook is legal in all jurisdictions. However, filing false reports or using automated tools to harass innocent users may violate both Facebook Terms of Service and local cybercrime laws. Professional services ensure all reports target verifiable Community Standards violations with documented evidence.

How to mass report a Facebook page?

Navigate to the page, click the three-dot menu, select "Find Support or Report Page," choose the correct violation category, and submit your report with evidence. For mass reporting, coordinate independent reports from multiple affected individuals. Professional Facebook enforcement services use authenticated multi-account systems to submit 50–500 structured reports per case with category optimization.

How long does Facebook take to act on mass reports?

Facebook reviews standard reports within 24–48 hours. High-volume reports with accurate violation categorization trigger review in 4–12 hours. Impersonation and safety reports receive the fastest response times. Professional enforcement services average 24–48 hour case resolution including monitoring and escalation phases.

Can Facebook detect mass reporting?

Yes, Facebook's systems detect coordinated inauthentic reporting. Meta identifies patterns from related accounts and discounts reports that appear abusive. Professional services mitigate detection using diverse aged accounts, randomized submission timing, varied report text, and genuine violation evidence. This approach mimics natural multi-victim reporting patterns that Meta processes as legitimate.

How to mass report a Facebook group?

Open the group, click the three-dot menu below the cover photo, select "Report Group," and choose the appropriate violation category. For effective enforcement, have multiple non-member accounts submit independent reports. Groups violating hate speech, fraud, or dangerous organization policies receive the fastest removal at 24–72 hours according to documented enforcement patterns.

What is the difference between a mass report bot and a professional service?

A mass report bot is standalone software automating report submission from multiple accounts. A professional enforcement service combines bot automation with human case analysis, evidence documentation, violation category optimization, and escalation through official Meta channels. Professional services offer refund guarantees and average 92% success rates compared to 30–50% for DIY tools.

Can I get banned for mass reporting on Facebook?

Filing false or abusive reports may result in Facebook restricting your reporting capabilities or imposing temporary account suspensions. Reporting genuine violations carries no risk to your account. Professional services protect client accounts by using dedicated sender account pools entirely separate from the client's personal Facebook profile, eliminating any retaliation risk.

What violation categories work best for Facebook mass reporting?

Impersonation and fake profile reports achieve the highest success rates at 94%, followed by fraud and scam reports at 91%, and intellectual property violations at 89%. Generic "spam" reports perform worst at 62%. Category accuracy is the single most important factor in mass report effectiveness. See the complete violation category breakdown above.

How much does a Facebook mass report service cost?

Professional enforcement services vary based on case complexity, target type, and required timeline. Your Supplier Guy provides free initial case assessments with detailed strategy and pricing within 2 hours. Every engagement includes a 72-hour refund guarantee. Contact us via Telegram or WhatsApp for a confidential quote.

Conclusion

A Facebook mass report bot is a powerful enforcement tool when used correctly – targeting genuine Community Standards violations with accurate category selection, documented evidence, and coordinated multi-account submission. The data from 214+ completed cases confirms that violation category accuracy (driving 94% success for impersonation reports) matters more than raw report volume. Professional enforcement services close the gap between DIY tools (30–50% success) and guaranteed outcomes (92% success, 24–48 hour resolution) by combining automated reporting scale with human case analysis, evidence documentation, and multi-channel escalation pathways. Whether you are protecting a brand, defending against harassment, or enforcing intellectual property rights across Instagram, Telegram, TikTok, or any of 15+ platforms, the right enforcement approach starts with understanding how Facebook's moderation systems actually process reports.